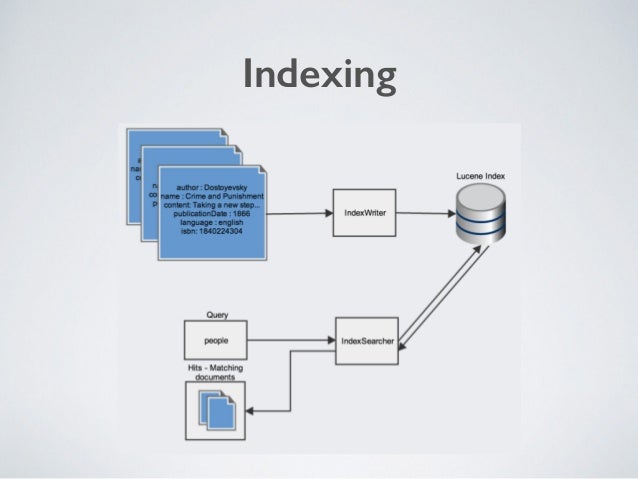

Nowadays, there is a lot of unstructured data available on the Internet and, more commonly, in Data Lakes (DL) specifically designed for Business Intelligence (BI). One of the main challenges in such Big Data environments is finding all the similar documents that share common information. To find similar free-text documents, a structured text-mining process has to execute two tasks:

1. Profile the documents to extract their descriptive metadata.
2. Compare the profiles of pairs of documents to detect their overall similarity.

Both tasks can be handled by an open-source text-mining project like Apache Lucene. For the first task, Apache Lucene can extract descriptive metadata about text documents through frequent-term extraction. For the second task, it supports cosine-similarity computations between the TF-IDF-weighted term frequencies that profile each free-text document. In layman's terms, this translates to the following process:

1. Create a new Apache Lucene index for the documents you will search for similarity.
2. Profile each document in the index by extracting its frequent terms (usually modeled as a term vector, where each term is accompanied by its frequency inside that document).
3. Compute the TF-IDF weights of the frequent terms for each document.
4. Compute the cosine-similarity score between each pair of documents. Because TF-IDF weights are never negative, the score lies between 0 and 1, where 0 means most different and 1 means most similar.

TF-IDF (Term Frequency-Inverse Document Frequency) is a very popular information retrieval technique for free-text documents. It weights the importance of each term across the whole corpus: terms that appear in many documents of the corpus get less weight, while more unique and infrequent terms get higher weights. As a result, very common words like “and”, “to”, “from”, etc. carry much less weight in the similarity score of a pair of documents than distinctive words like “biodiversity”, “algorithm”, “DNA-Microarray”, etc.

The Java-based Apache Lucene library (an API that is easy to integrate into Maven-based Java projects) already includes an open-source implementation of the TF-IDF computation and of cosine-similarity scoring between documents. It also provides several pre-processing functions that cleanse and prepare the documents for the text-mining process:

- Tokenization: splitting the document text to extract each term individually.
- Stemming: reducing the words of a language to their simplest form, or stem (e.g. in English, a word like “running” will be stemmed to “run”).
- Stop-word removal: removing the frequent, repetitive words that don't matter in the similarity computation.

We demonstrate how to use this library with the code sample below, where the important lines are commented to explain the task each of them performs.

```java
// Import the Apache Commons Math library classes to handle the term vectors from Apache Lucene

/* A class to find the similarity of all text documents referenced in a text file */

// The name of the field stored by Apache Lucene which holds the text of each document
private static final String CONTENT = "Content";
// Changes state with new terms after each call to the getTermFrequencies() method
private Set<String> terms = new HashSet<>();
// The Apache Lucene index for the documents to mine for similarities
private ArrayList directoryIndex;
// The field type profiled by Apache Lucene to collect its term frequencies
public final FieldType TYPE_STORED = new FieldType();
// Stores the counts of all terms found in the corpus
private HashMap<String, Integer> termsCount;
// Stores the IDs of the docs already scanned and stored in termsCount
private ArrayList<Integer> scannedDocs;

// Initialise the field to profile in each document

// Initialise an in-memory (RAM-based) index of the documents in the input folder using Apache Lucene
Directory directory = createIndex(lookupFile);

// The output file where the document similarities will be stored
String outputFile = "C:/Users/default/test/docs_similarity.csv";
PrintWriter writer = new PrintWriter(outputFile, "UTF-8");
```
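The three pre-processing steps (tokenization, stemming, stop-word removal) can be illustrated with a minimal plain-Java sketch (Java 9+, standard library only). This is not Lucene's analyzer API: the class `Preprocess`, its tiny stop-word list, the regex tokenizer and the naive suffix-stripping stemmer are hypothetical stand-ins, chosen only to show what each step does to the text.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Set;

// Hypothetical plain-Java illustration of the three pre-processing steps (not Lucene's API)
final class Preprocess {
    // Tiny illustrative stop-word list (assumption: real analyzers use much longer lists)
    static final Set<String> STOP_WORDS =
            Set.of("a", "and", "from", "in", "is", "of", "the", "to");

    // Tokenization: lower-case the text and split it on every run of non-letter characters
    static List<String> tokenize(String text) {
        List<String> tokens = new ArrayList<>();
        for (String t : text.toLowerCase(Locale.ROOT).split("[^\\p{L}]+")) {
            if (!t.isEmpty()) {
                tokens.add(t);
            }
        }
        return tokens;
    }

    // Stemming: a naive suffix stripper, far cruder than a Porter-style stemmer
    static String stem(String word) {
        if (word.length() > 5 && word.endsWith("ing")) {
            String base = word.substring(0, word.length() - 3);
            int n = base.length();
            // Undo a doubled final consonant ("runn" -> "run"), but keep "ll", "ss", "zz"
            if (base.charAt(n - 1) == base.charAt(n - 2) && "lsz".indexOf(base.charAt(n - 1)) < 0) {
                base = base.substring(0, n - 1);
            }
            return base;
        }
        if (word.length() > 3 && word.endsWith("s") && !word.endsWith("ss")) {
            return word.substring(0, word.length() - 1); // plural "algorithms" -> "algorithm"
        }
        return word;
    }

    // Full pipeline: tokenize, drop stop words, then stem the remaining tokens
    static List<String> preprocess(String text) {
        List<String> terms = new ArrayList<>();
        for (String token : tokenize(text)) {
            if (!STOP_WORDS.contains(token)) {
                terms.add(stem(token));
            }
        }
        return terms;
    }

    public static void main(String[] args) {
        System.out.println(preprocess("Running to the DNA-Microarray and the algorithms"));
    }
}
```

Running `main` prints `[run, dna, microarray, algorithm]`: the stop words “to”, “the” and “and” are dropped, “running” is stemmed to “run”, and this particular tokenizer splits the hyphenated “DNA-Microarray” into two terms.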

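Steps 2 to 4 of the process (term vectors, TF-IDF weighting, cosine similarity) can be sketched the same way in plain Java, again without Lucene. The sketch assumes the textbook weighting `tfidf(t, d) = tf(t, d) * ln(N / df(t))`, where `tf(t, d)` is the raw count of term `t` in document `d`, `N` is the number of documents and `df(t)` is the number of documents containing `t`; Lucene's own scoring uses a different variant of this formula. The class `TfIdfCosine` and the three-document toy corpus are hypothetical, and the documents are assumed to be already pre-processed into term lists.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

// Hypothetical plain-Java sketch of TF-IDF weighting plus cosine similarity (not Lucene's API)
final class TfIdfCosine {

    // Term vector: raw count of each term inside one document
    static Map<String, Integer> termCounts(List<String> doc) {
        Map<String, Integer> counts = new HashMap<>();
        for (String term : doc) {
            counts.merge(term, 1, Integer::sum);
        }
        return counts;
    }

    // TF-IDF vector for every document: tf(t, d) * ln(N / df(t))
    static List<Map<String, Double>> tfIdf(List<List<String>> corpus) {
        int n = corpus.size();
        Map<String, Integer> df = new HashMap<>(); // number of documents containing each term
        List<Map<String, Integer>> tfs = new ArrayList<>();
        for (List<String> doc : corpus) {
            Map<String, Integer> tf = termCounts(doc);
            tfs.add(tf);
            for (String term : tf.keySet()) {
                df.merge(term, 1, Integer::sum);
            }
        }
        List<Map<String, Double>> vectors = new ArrayList<>();
        for (Map<String, Integer> tf : tfs) {
            Map<String, Double> v = new HashMap<>();
            for (Map.Entry<String, Integer> e : tf.entrySet()) {
                double idf = Math.log((double) n / df.get(e.getKey()));
                v.put(e.getKey(), e.getValue() * idf);
            }
            vectors.add(v);
        }
        return vectors;
    }

    // Cosine similarity: dot(a, b) / (|a| * |b|), defined as 0 if either vector is all zeros
    static double cosine(Map<String, Double> a, Map<String, Double> b) {
        double dot = 0, normA = 0, normB = 0;
        for (Map.Entry<String, Double> e : a.entrySet()) {
            dot += e.getValue() * b.getOrDefault(e.getKey(), 0.0);
            normA += e.getValue() * e.getValue();
        }
        for (double w : b.values()) {
            normB += w * w;
        }
        return (normA == 0 || normB == 0) ? 0.0 : dot / Math.sqrt(normA * normB);
    }

    public static void main(String[] args) {
        List<List<String>> corpus = List.of(
                List.of("dna", "microarray", "algorithm"),
                List.of("dna", "microarray", "biodiversity"),
                List.of("biodiversity", "forest", "species"));
        List<Map<String, Double>> v = tfIdf(corpus);
        // Score every pair of documents once
        for (int i = 0; i < v.size(); i++) {
            for (int j = i + 1; j < v.size(); j++) {
                System.out.printf(Locale.ROOT, "doc%d vs doc%d: %.3f%n", i, j, cosine(v.get(i), v.get(j)));
            }
        }
    }
}
```

Running `main` gives about 0.378 for doc0 vs doc1 (they share “dna” and “microarray”), 0.146 for doc1 vs doc2 (they share only “biodiversity”) and 0.000 for doc0 vs doc2, which have no terms in common. With this idf, a term that occurs in every document gets weight 0 and contributes nothing to any score, which is exactly the down-weighting of very common words that TF-IDF is meant to achieve.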